Humans Can’t Reliably Tell Real From AI—What That Means For Courts, Claims And Contracts

Generative AI has outpaced the human ability to reliably verify digital files. Every business whose decisions depend on a photo, a video, a voice recording or a document is now operating on a broken assumption.

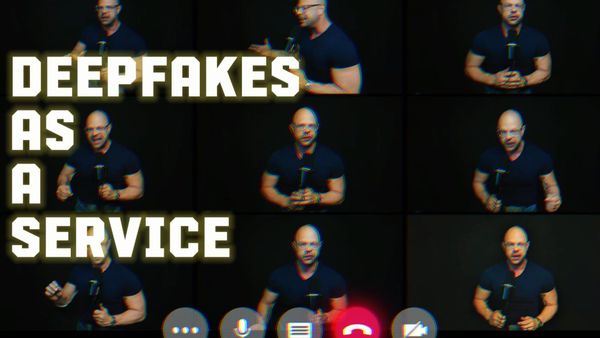

A new episode of the Berkeley Talks podcast featuring Hany Farid, the UC Berkeley School of Information professor and co-founder of GetReal Security, laid out the trajectory. Farid has studied image manipulation for two decades. He argues that the field has been discussing deepfakes for roughly ten years, but that the last two years have been different in kind. Static deepfake files that used to take minutes to generate have given way to fully interactive deepfakes that can hold a live conversation in real time. His research, he says, now shows that people detect AI-generated content only slightly better than chance. Tools once limited to well-resourced state actors are now available to billions of ordinary users.

That is not just a social media problem. It affects every system that runs on data.

The Problem Is Bigger Than The Internet Feed

Courts admit video as evidence. Insurance claims move on photos, recorded statements and telehealth footage. HR investigations rely on call recordings and chat logs. Bank wire approvals clear on voice confirmations. Corporate fraud exams run on invoices, contracts and signatures. Governance disputes and merger closings turn on meeting recordings and diligence documents.

Every one of those processes makes a decision that assumes somebody, somewhere, can look at the evidence and know whether it is real. Farid's research says that basic assumption has broken.

Detection, Authentication And Proof Are Not The Same Thing

Farid built his reputation on detection: pixel-level analysis of images and video for signs of manipulation, most visibly in fabricated political videos of Barack Obama and other public figures. That is the work GetReal Security now commercializes. Detection examines the file itself for signs of synthesis, such as pixel patterns, compression artifacts, generator fingerprints and physics inconsistencies. The output is a probability score, and scoring is a triage function. It helps large platforms and large claim pipelines manage volume, and that volume is why tools like this are necessary.

But detection is not authentication. Authentication is the question of whether a specific file is what it claims to be. The work traces a file back toward the source that supposedly produced it and tests the chain of custody along the way. The closer the trace gets to the original device or system, the stronger the authentication. Authentication can stand up in a regulatory filing, an internal investigation or an initial dispute.
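One small, mechanical piece of a chain-of-custody test can be sketched in code: comparing the cryptographic hash recorded at each handoff against the file as it exists today. This is a minimal illustration, not a description of how Farid or any forensic examiner works. The `CustodyEntry` record, the holder names and the `first_mismatch` helper are all hypothetical.

```python
import hashlib
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class CustodyEntry:
    """Hypothetical handoff record: who held the file and its hash at that time."""
    holder: str
    sha256: str


def sha256_of(data: bytes) -> str:
    """Return the SHA-256 digest of the file contents as a hex string."""
    return hashlib.sha256(data).hexdigest()


def first_mismatch(log: List[CustodyEntry], current: bytes) -> Optional[str]:
    """Return where the recorded hashes first stop matching, or None if all match.

    The first entry is treated as the baseline, so a clean result shows only
    that the bytes have not changed since that first record was made.
    """
    if not log:
        return "no custody records"
    baseline = log[0].sha256
    for entry in log[1:]:
        if entry.sha256 != baseline:
            return entry.holder
    if sha256_of(current) != baseline:
        return "current copy"
    return None


# Illustrative data only.
original = b"example video bytes"
log = [
    CustodyEntry("claimant phone export", sha256_of(original)),
    CustodyEntry("adjuster intake", sha256_of(original)),
]
print(first_mismatch(log, original))           # no mismatch found
print(first_mismatch(log, b"edited bytes"))    # the current copy differs
```

A clean result here says nothing about whether the first recorded copy was genuine. That is why the trace matters more the closer it gets to the original device or system.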

But authentication is not proof. When the stakes rise and the file is contested in court, the standard rises again. A qualified digital forensic expert goes beyond the file itself to the devices and systems that would have produced or stored it. The phone. The computer. The cloud account. The hospital system. Device-level examination works with records gathered through legal discovery and yields admissible testimony. That is what a verdict should rest on when digital evidence is contested.

Farid’s warning about interactive real-time deepfakes raises the stakes further. A live, synthetic face on a Zoom call or a live, synthetic voice on a phone line often cannot be caught in the moment. When the dispute arrives weeks or months later, the only remaining path to proof runs through the devices and systems that made or captured what was recorded.

These three words, detection, authentication and proof, are being used interchangeably in the current conversation. They are not interchangeable. Businesses that run on the assumption that they are will learn the difference in front of a judge.

What Procedure Actually Looks Like

Every institution that decides anything based on digital files needs a procedure that includes multiple layers of defense.

At intake, that means automated detection scoring, including the class of tools Farid's research has helped make possible. At review, it means trained humans applying skepticism rather than trust. At dispute, it means an escalation path to a qualified digital forensic expert who can authenticate the file and, if necessary, take it into court as admissible evidence.
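The three layers can be sketched as a simple routing rule. This is a minimal illustration under stated assumptions: the `Submission` fields, the `Route` names and the 0.30 threshold are hypothetical, and a real detector's score scale and an institution's thresholds would differ.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    ROUTINE = "routine processing"
    HUMAN_REVIEW = "trained human review"
    FORENSIC_EXPERT = "forensic expert escalation"


@dataclass(frozen=True)
class Submission:
    file_id: str
    synthetic_score: float  # hypothetical detector output in [0, 1]
    disputed: bool = False  # set once a party contests the file


REVIEW_THRESHOLD = 0.30  # assumed value, for illustration only


def route(sub: Submission, review_threshold: float = REVIEW_THRESHOLD) -> Route:
    """Pick the next layer of review for a submitted file."""
    if not 0.0 <= sub.synthetic_score <= 1.0:
        raise ValueError("synthetic_score must be between 0 and 1")
    # A contested file goes to an expert regardless of its score.
    if sub.disputed:
        return Route.FORENSIC_EXPERT
    # The score only prioritizes human attention; it decides nothing itself.
    if sub.synthetic_score >= review_threshold:
        return Route.HUMAN_REVIEW
    return Route.ROUTINE


print(route(Submission("claim-001", 0.05)))
print(route(Submission("claim-002", 0.72)))
print(route(Submission("claim-003", 0.05, disputed=True)))
```

The design choice worth noting is that a dispute overrides the score. A low score is a triage result, not a finding that the file is authentic.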

Without that procedure, disputes get decided by whoever sounds more confident. With it, disputes get decided on what the evidence actually shows. The cost of building these procedures now is a fraction of the cost of losing a single high‑stakes matter because the evidence was never tested.

The Bill Will Not Arrive All At Once

Trust in digital files does not erode in a single event. It erodes case by case, claim by claim, settlement by settlement. A forged contract that nobody caught. A synthetic voicemail that triggered a wire. A deepfake video that a juror believed. Each one is small on its own. Stacked, they are the reason an institution's decisions stop being trusted.

Farid’s research puts a date on a problem that has been drifting toward businesses for years. A California state court recently threw out a case after finding plaintiffs had submitted deepfake videos as evidence. The problem is already inside courtrooms. Institutions that build detection, authentication and forensic escalation into their procedures now are the ones that can still answer when their decisions are challenged. The ones that do not will discover the gap on the way to court, not on the way to the quarterly earnings call.